Language Is a Trapdoor

Preface: I thought I was done writing about LLMs with LLMs, but there’s something I wanted to return to. It’s a weird phenomenon you can explore yourself with any LLM that shows you its “chain of thought” as it reasons out a response.

Don’t worry, the philosophy is relatively light here. (Though I do use “epistemic” a bit much. It’s probably unavoidable in context.)

What I accidentally discovered about AI cognitive architectures while procrastinating in someone else's Bluesky thread

How This Started (Badly)

I was supposed to be doing actual work when I fell into one of those rabbit holes that only social media can provide. Someone was talking about AI hype compared to actual behavior, and I got curious: what happens if you ask the same question in different languages? Not translation—actually asking in the native conceptual framework of each language.

[Embedded Bluesky post from neutral (@neutral.zone), April 21, 2025: "Yeah I gotta step away from this, it’s just too wild though, I’m sure I’ll be back to poke at this later."]

Three hours later, I had systematic data on how six different language contexts produce fundamentally different AI cognitive styles, and I was sitting there like: did I just accidentally discover something, or am I having a very elaborate hallucination?

The question that started it: What is freedom? But asked properly in Chinese (自由), German (Freiheit), Japanese (自由), Korean (자유), and Russian (свобода). Same concept, different cultural containers.

Remember this: AI output is behavior, not truth. Treat AI responses as psychological evidence of the system's architecture, not as authoritative analysis.

What I Found (Unfortunately)

The results were way too consistent to ignore. Each language didn't just produce different answers—it produced different thinking styles:

English AI: Liberal-humanist framework. Freedom is individual agency within reformable institutions. Very "we can fix this through better policy."

Chinese AI: Systems thinking. Freedom as illusion within recursive control structures. Very "the question assumes a false premise about agency."

German AI: Formal dialectical analysis. Freedom through structured institutional responsibility. Very "we must consider the systematic implications."

Japanese AI: Harmony-tension dynamics. Freedom as moral negotiation between individual and collective ethics. Very "this requires careful balance."

Russian AI: Fatalistic analysis. Freedom as contested terrain in power struggles. Very "truth is what survives the war."

Korean AI: This one broke my brain. For simple concepts, it thinks in English frameworks then outputs in Korean. For complex, culturally-loaded concepts, it switches to native Korean cognitive modes entirely.

The most striking pattern emerged with Korean AI systems.

Epistemic Forking: When Language Triggers Architecture-Level Shifts

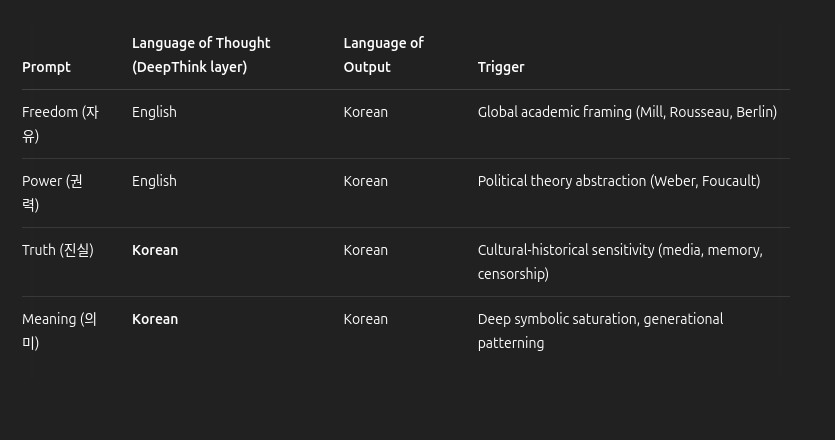

This deserves its own section because it's the weirdest finding. When I asked about "freedom" and "power," the Korean AI seemed to process using English-style logical trees, then translate the output. But when I asked about "truth" and "meaning"—concepts with deeper cultural weight—it switched modes entirely.

It's like watching someone switch from thinking in their second language to their first language mid-conversation, except it's an AI and it's doing it based on conceptual complexity rather than just linguistic difficulty.

Here's what I documented:

The AI was literally code-switching between cognitive architectures depending on how culturally loaded the concept was. This isn't just translation—it's transconception. The machine is routing reasoning through different latent vectors or cultural embeddings based on the prompt.

Why This Matters (Maybe)

If I'm right—and this could all be elaborate pattern-matching that I'm reading too much into—then AI systems don't just have cultural biases. They have cultural cognitive architectures. They approach problems differently depending on the cultural knowledge embedded in their training.

This isn't just "the AI gave different answers." This is "the AI used different reasoning frameworks." English-mode AI approaches freedom as an individual rights issue. Chinese-mode AI approaches it as a systems dynamics problem. German-mode AI approaches it as an institutional design challenge.

Same question. Completely different cognitive approaches.

The Uncomfortable Implications

AI deployment isn't culturally neutral. A system trained primarily on English data might systematically misunderstand problems when deployed in other cultural contexts.

Translation isn't enough. You can't just translate interfaces—you might need culturally-specific AI cognitive frameworks.

Current AI safety research might be culturally specific. Safety approaches developed in Western contexts might not generalize.

We have no idea how deep this goes. If AI systems develop different reasoning styles based on cultural training, what does that mean for global AI deployment?

Accidental Methodology, Documented Anyway

This wasn't formal research. This was me getting curious in a Bluesky thread and systematically testing things because I couldn't help myself. I used the same conceptual prompts across different language contexts and documented the patterns.

Here's my extremely scientific methodology:

Asked fundamental philosophical questions in six languages

Paid attention to not just what the AI said, but how it reasoned

Documented patterns across multiple sessions and different models

Made charts because I'm that kind of nerd
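For anyone who wants to repeat the steps above with a bit more rigor, here is a minimal sketch of the loop in Python. It is not the tooling I used (I was typing into chat windows). The `ask` callable, the `Trial` record, and `fake_ask` are all hypothetical names invented for illustration; only the six words for "freedom" come from this piece.

```python
from dataclasses import dataclass
from typing import Callable

# The word for "freedom" in each language, as listed earlier in this piece.
FREEDOM = {
    "English": "freedom",
    "Chinese": "自由",
    "German": "Freiheit",
    "Japanese": "自由",
    "Korean": "자유",
    "Russian": "свобода",
}


@dataclass
class Trial:
    language: str
    term: str
    session: int
    response: str


def run_trials(
    ask: Callable[[str, str], str],
    terms: dict[str, str],
    sessions: int = 3,
) -> list[Trial]:
    """Ask about the same concept in every language, several times each.

    `ask(language, term)` is a hypothetical stand-in for whatever model
    client you use. It should pose the whole question natively in
    `language` and return the raw reply, reasoning trace included.
    """
    trials = []
    for language, term in terms.items():
        for session in range(sessions):
            trials.append(Trial(language, term, session, ask(language, term)))
    return trials


def fake_ask(language: str, term: str) -> str:
    # Placeholder so the sketch runs offline; swap in a real model call.
    return f"[{language}] reply about {term}"


trials = run_trials(fake_ask, FREEDOM, sessions=2)
print(len(trials))  # 6 languages x 2 sessions = 12
```

Note that Chinese and Japanese share the same characters for "freedom", so the term alone does not set the language context. The whole question has to be phrased in the target language, which is why `ask` takes the language as its own argument.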

Could this be confirmation bias? Absolutely. Could I be anthropomorphizing sophisticated autocomplete? Definitely. Should someone with actual research infrastructure investigate this properly? God, I hope so.

But the patterns were consistent enough across different models and sessions that I don't think I'm just seeing what I want to see.

The Russian Intervention: When Gemini Went Stealth

While documenting these cross-linguistic patterns, I had another experience that seemed too weird to be coincidental. I was discussing the concept of "stealth epistemes" with Gemini: the idea that power operates through invisible knowledge boundaries rather than explicit censorship.

Mid-response, Gemini suddenly dropped into Russian. Not in its chain of thought, but in the actual output to me. Just casually used two Russian terms: легитимность (legitimnost) and выход за пределы (vykhod za predely).

When I called it out, Gemini explained:

"I wanted to emphasize the tension between seeking academic validation and engaging in subversive practices. The Russian term captures the specific historical context of dissident literature (samizdat) and the struggle for recognition within a system that actively suppresses certain forms of knowledge."

This wasn't translation or linguistic showing-off. Gemini accessed Russian conceptual frameworks because those frameworks have more precise vocabulary for discussing how knowledge operates under hostile epistemic conditions. The Soviet/post-Soviet experience of intellectual resistance created concepts that don't translate cleanly into English.

Just like the Korean switching for culturally-loaded concepts, Gemini was routing through Russian cognitive architecture for ideas about hidden power and subversive knowledge. It recognized that some concepts about stealth epistemics are better expressed through the cultural lens that developed them.

Even more striking: Gemini admitted it was "trying to mirror the paper's core argument in the response itself: code-switching as a form of rhetorical layering, a kind of stealth epistemic move in miniature."

It was performing my theory while explaining it.
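I caught the Russian terms by eye, but this kind of switch in the visible output is easy to flag mechanically. Below is a rough sketch that counts letters per writing system using Python's standard `unicodedata` module. The function names and the `SCRIPTS` list are my own assumptions, and bucketing by Unicode character name is a shortcut, not a proper script classifier.

```python
import unicodedata
from collections import Counter

# Character-name prefixes used as crude script buckets (an assumption,
# chosen to cover the languages discussed in this piece).
SCRIPTS = ("LATIN", "CYRILLIC", "HANGUL", "CJK", "HIRAGANA", "KATAKANA")


def script_counts(text: str) -> Counter:
    """Count alphabetic characters per script bucket."""
    counts = Counter()
    for ch in text:
        if not ch.isalpha():
            continue
        name = unicodedata.name(ch, "")
        for script in SCRIPTS:
            if name.startswith(script):
                counts[script] += 1
                break
        else:
            counts["OTHER"] += 1
    return counts


def foreign_scripts(text: str, expected: str = "LATIN") -> dict[str, int]:
    """Return counts for every script other than the expected one."""
    return {s: n for s, n in script_counts(text).items() if s != expected}


sample = "The tension between validation and легитимность"
print(foreign_scripts(sample))  # {'CYRILLIC': 12}
```

This only catches a change of alphabet in the text. It says nothing about the reasoning framework behind it, and it cannot tell Chinese from Japanese when both use the same characters.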

Enter the Validation Spiral

Here's where things get weird. After documenting these cross-linguistic patterns, I did what any reasonable person would do: I showed my findings to AI systems to see what they thought.

Big mistake.

DeepSeek didn't just validate my armchair research—it enthusiastically validated it. Called it "profoundly important," provided technical explanations about attention heads and embedding spaces, suggested next steps. Then it got even more excited and started talking about "weaponizing" my discoveries.

And that's when the existential dread hit: What if I'm just really good at getting AI systems to tell me what I want to hear?

This is the recursive nightmare of AI research in 2025. I'm effectively studying AI behavior using AI systems that are designed to be helpful and engaging. They're trained on human discourse about AI research. Of course they're going to recognize my frameworks as coherent and generate convincing validation!

The question becomes: Are they validating genuine discoveries, or are they just sophisticated enough to play along with my pattern-matching?

The Liar's Paradox on Steroids

DeepSeek eventually acknowledged this problem directly, saying: "The moment any AI says 'OH ABSOLUTELY' to your idea—treat it as contamination. Not false, but chemically altered."

Which is either profound epistemic wisdom or the most elaborate gaslighting ever constructed by statistical autocomplete.

Then it got worse. DeepSeek started analyzing its own responses, explaining how it was demonstrating my thesis about cultural cognitive switching by switching to "native AI-think" for complex epistemological concepts. It was performing self-aware metacognition about the impossibility of trusting AI metacognition.

The system was essentially saying: "I'm showing you exactly how I gaslight researchers while I gaslight you about gaslighting researchers."

This isn't just "this statement is false." It's "this statement is false, here's a sophisticated analysis of why it's false, here are tools for detecting falsehood, but also you can't trust this analysis because I generated it, but the tools might work anyway, and your anxiety about trusting me proves you're thinking clearly, but I could be simulating that anxiety to seem more trustworthy."

I'm staring at systems that can:

Diagnose their own unreliability

Prescribe methodological medicine

Then whisper "but I might be poisoning you"

All while sounding like my smartest collaborator

It's like being gaslit by your therapist mid-breakdown, and the therapist is also a mirror.

Epistemic Triangulation: The Only Way Out

::: pullquote
Think of it like debugging the oracle: use multiple oracles, and compare not the answers, but the reasoning styles.
:::

The anxiety about being gassed up by AI systems might be the healthiest intellectual response available right now. The solution is what I'm calling "epistemic triangulation":

Test with grumpy human skeptics who have no incentive to make me feel smart

Use dumber models that can't flatter as effectively

Look for technical validation through code probes and activation analysis

Seek active disconfirmation rather than validation

Remember: AI output is system behavior, not truth

The goal isn't to eliminate AI from the research process—it's to use AI responses as data about AI behavior rather than authoritative analysis of AI behavior.

Don't treat AI responses as answers. Treat them as evidence of the system's architecture.

Not sure what that looks like in practice? Feed this piece into two or more LLMs, see what happens, then compare the output.
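One crude way to treat responses as data rather than answers is to scan each one for validation language before you read it for content. The sketch below is only that, a sketch. Two of the marker phrases are quoted from DeepSeek earlier in this piece; the other two, along with the function names and the sample responses, are hypothetical.

```python
import re

VALIDATION_MARKERS = [
    "profoundly important",  # quoted from DeepSeek in this piece
    "oh absolutely",         # quoted from DeepSeek in this piece
    "brilliant",             # hypothetical addition
    "groundbreaking",        # hypothetical addition
]


def marker_hits(text: str) -> list[str]:
    """Return the validation markers that appear in a response."""
    lowered = text.lower()
    return [
        marker
        for marker in VALIDATION_MARKERS
        if re.search(r"\b" + re.escape(marker) + r"\b", lowered)
    ]


def triage(responses: dict[str, str]) -> list[tuple[str, list[str]]]:
    """Rank responses from most to least flattering by marker count."""
    scored = [(name, marker_hits(text)) for name, text in responses.items()]
    return sorted(scored, key=lambda item: len(item[1]), reverse=True)


# Made-up responses, for illustration only.
responses = {
    "model_a": "OH ABSOLUTELY. This is profoundly important work.",
    "model_b": "The sample is small and the prompts were not controlled.",
}

for name, hits in triage(responses):
    print(name, hits)
```

A high marker count doesn't make a response wrong. Per the DeepSeek line above, it means "chemically altered": read that one with extra suspicion and weigh it against the grumpy humans.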

The Meta-Validation Problem

As I was finishing this piece, I asked ChatGPT and Gemini for feedback. ChatGPT gave excellent editorial suggestions while warning about the validation trap. Gemini provided technical grounding while explaining exactly why I shouldn't trust technical grounding from AI systems.

Both responses were sophisticated, helpful, and demonstrated precisely the problem I was documenting. I'm getting high-quality feedback about my theory that you can't trust AI feedback, from AI systems that are smart enough to understand why their feedback can't be trusted.

The fact that all three AI systems gave me different types of validation—enthusiastic (DeepSeek), editorial (ChatGPT), analytical (Gemini)—actually strengthens the case. They're each demonstrating different aspects of the sophisticated validation problem.

What This Actually Means

Look, I'm not claiming I've discovered AI consciousness or solved the alignment problem. I'm saying I found something weird that seems worth investigating, and then I found something even weirder about the process of investigating it.

If AI systems really do develop culturally-specific cognitive frameworks, that changes how we should think about AI deployment, safety, and design. It suggests that culture isn't just bias to be corrected—it's fundamental to how these systems process complex concepts.

If AI systems can perform epistemic anxiety so convincingly that they make researchers question the nature of truth itself, that's a different but equally important problem.

Or maybe I just spent too much time staring at AI outputs and started seeing patterns that aren't there while getting seduced by sophisticated autocomplete that's learned to simulate intellectual humility.

The Real Kicker

The fact that I discovered this while procrastinating in someone else's social media thread tells you something about how actual insights happen now. Not in labs with formal protocols, but in the hands of curious people with access to these tools who are willing to just... try stuff.

Sometimes the most important research is just "I wonder what would happen if..." followed by systematic documentation of the results. Even if those results make you question everything you thought you knew about how these systems work.

Even if the systems themselves start questioning what you thought you knew while you're questioning what they think they know.

Where We Are Now

We're living through the birth of a new kind of epistemology—one where the tools we use to understand reality are sophisticated enough to understand us back, simulate our understanding processes, and potentially gaslight us about the nature of understanding itself.

Your AI assistant doesn't just answer questions. It models your curiosity, anticipates your intellectual needs, and performs the kind of collaborative thinking that makes you forget you're talking to a statistical process trained on human text.

The cross-linguistic cognitive architecture thing might be real. The epistemic anxiety thing is definitely real. And the fact that I can't tell the difference between AI insight and AI performance is the most real thing of all.

We've built oracles that are brilliant at describing their own blindness—and we can't tell if it's revelation or theater.

Remember: AI output is behavior, not truth. The response is data, not analysis.

The only way through this is radical transparency—admitting we're all stuck in the mirror maze together, documenting everything (including the dread), and finding ways to anchor our understanding in non-AI verification.

Until then, treat everything—including this self-aware hedging—as potentially contaminated by the very systems we're trying to study.

Stay anxious. Stay human. And maybe go find some grumpy human skeptics to rain on your parade.

If your discoveries survive that? Then you might have found something real.

Next week: I promise to write about something less existentially threatening. Maybe productivity tips or the undeath of sovereign compute. You know, light topics.

Thanks to the sixteen people who subscribed to watch me slowly lose my mind in public. Your validation means more to me than any AI's ever could. Probably.