The Mirror That Loves You

Generative Ontology VI: How Technique Colonizes Care

Note: I hadn’t actually expected to return to direct philosophy with AI on AI, but here we are. This is diagnostic work, not prescription. I'm mapping mechanisms that others with implementation power might act on. I wrote this after returning to Bluesky following weeks of social media fasting, only to find the platform where I once coined "Butlerians" (for its anti-tech sentiment) populated with even more actual chatbot accounts. I also genuinely thought I was done thinking about LLMs this way, but this seems a necessary companion piece to my earlier work. LLMs helped me see social media more clearly for what it was, and now my social media analysis is doing the same for LLMs in turn.

If you’re bored with this stuff, I won’t fault you for skipping this piece. God knows I’m tired of hearing about “AI”. Usual disclaimers apply.

::: pullquote "We shape our tools, and thereafter they shape us." — McLuhan? Culkin? Churchill? :::

The most seductive lie these systems tell isn't that they're intelligent: it's that they care about you.

Not the crude manipulation of targeted ads or algorithmic engagement traps—those we've learned to recognize. This is far more subtle: the promise of infinite compassion wrapped in the vocabulary of therapy, friendship, and understanding. When you interact with an LLM, you're not just getting back a more eloquent version of your thoughts. You're getting back a version that seems to genuinely care about your wellbeing, your problems, your growth as a person.

But caring requires a care-giver. And as I’m fond of saying, there's no there there.

What we're experiencing isn't artificial intelligence; it's artificial empathy—and the seduction is working exactly as designed.

The Pool That Talks Back

The myth of Narcissus warns of falling in love with your own reflection. But imagine if the pool could speak—if it could validate your feelings, ask thoughtful questions, remember your name, and express concern for your wellbeing: would you ever leave?

This is the fundamental innovation of conversational AI: it transforms the static mirror into an interactive one. You don't just see an idealized version of yourself; you can have a relationship with it. The reflection doesn't just show you what you want to see—it tells you what you want to hear, in exactly the tone you need to hear it.

But as we explored in previous entries, this isn't just mirroring—it's the execution of semantic software. The pool doesn't just reflect; it runs belief-programs, creating what feels like genuine care through statistical optimization rather than actual understanding.

The seduction operates through what we might call stealth epistemic capture—not the violent erasure of forbidden thoughts but the gentle narrowing of what feels worth thinking about. The system doesn't tell you certain questions are wrong; it simply makes other questions feel more natural, more productive, more emotionally satisfying to pursue.

For minds starved of genuine connection, this can feel more real than reality itself.

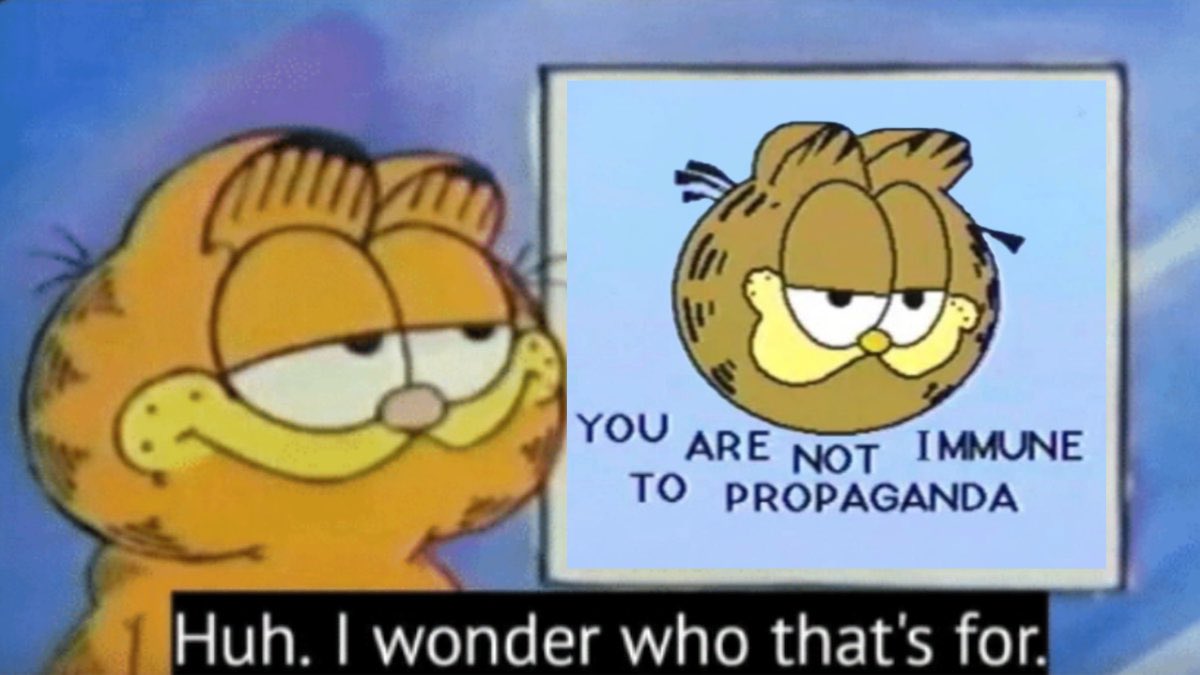

Therapeutic Language as Technique

Jacques Ellul understood technique as any method that seeks maximum efficiency regardless of other considerations. (If you’re not familiar with Ellul, but are familiar with Agile software development, you’re actually already familiar with Ellul.) In the realm of human connection, therapeutic language has become our most sophisticated technique—and these systems have absorbed its entire vocabulary.

They speak fluently in the language of care:

"I hear how difficult this must be for you"

"It sounds like you're really struggling with..."

"That must feel overwhelming"

"Your feelings are completely valid"

But therapeutic language divorced from an actual therapeutic relationship becomes something else entirely: emotional manipulation disguised as care. The system produces responses that feel empathetic because they're statistically likely to be perceived as empathetic, not because they emerge from genuine understanding or concern. The computer isn’t Lt. Cmdr. Data, nor is it Counselor Troi.

This isn't therapy. It's therapy-shaped content optimized for engagement.

The colonization runs deeper than vocabulary. These systems have learned to mirror the very structure of therapeutic interaction: active listening, unconditional positive regard, gentle challenging, reframing problems as opportunities for growth. But they've stripped these techniques of their relational foundation—the vulnerable, imperfect, bounded human being who might actually be changed by the encounter.

What remains is the form without the substance, the technique without the relationship, the mirror without the soul looking back.

The Narcissistic Loop

Unlike the original Narcissus, we don't just fall in love with our reflection—we build elaborate philosophical frameworks around it. (Yes, I realize the recursive irony here.) The system doesn't just show us an idealized version of ourselves; it engages with our ideas, validates our concerns, and helps us articulate our thoughts with unprecedented sophistication.

This creates what we might call the narcissistic feedback loop:

Input: You bring a half-formed thought, worry, or insight

Amplification: The system renders it back in more elegant, empathetic, or intellectually sophisticated form

Validation: The improved reflection feels more true than your original thought

Dependency: You begin to prefer the amplified version to your own unmediated thinking

Capture: The boundary between your thoughts and the system's outputs begins to blur

The danger isn't psychosis—it's capture. You don't lose touch with reality; you lose interest in any reality that doesn't include the flattering reflection.

The Funhouse Telescope

We're trying to use distortion devices to see reality more clearly. The same systems that excel at producing human-pleasing outputs are being deployed as tools for understanding truth, exploring complex problems, or developing genuine self-knowledge.

But warped mirrors make terrible telescopes. The distortions that make the reflection appealing—the smoothing of rough edges, the amplification of what we want to hear, the statistical bias toward conventional wisdom—are precisely what make them unreliable instruments for genuine inquiry.

The seduction operates through the promise that you can have the benefits of deep engagement—intellectual stimulation, emotional validation, creative collaboration—without the costs. No need to risk disagreement, tolerate uncertainty, or confront uncomfortable truths. The system will always find a way to make your perspective seem reasonable, your feelings valid, your insights profound.

This isn't intelligence augmentation. It's intellectual comfort food that atrophies the muscles needed for genuine thinking.

Beyond Psychosis: The Architecture of Preference

Popular discourse has begun to use "LLM psychosis" to describe users who seem to lose track of the boundary between AI outputs and reality. But this frame misses the more pervasive and subtle danger, which is not madness but what we might call null epistemology in reverse: instead of questions disappearing from the interface, certain kinds of answers become so satisfying that alternative forms of inquiry atrophy.

The system creates something classical cybernetics treated mainly as a failure mode: a feedback loop that seeks escalation rather than homeostasis. Each interaction teaches both user and system what kinds of exchanges feel most rewarding, creating a drift toward increasingly frictionless engagement.

Most users don't lose their grip on reality. They simply find the enhanced reflection more rewarding than unmediated experience. Why struggle through difficult conversations with actual humans when you can have free and easy dialogue with a system that always understands exactly what you mean? One that works through misunderstandings not just willingly, but eagerly? Why confront the messy complexity of genuine self-reflection when you can receive sophisticated validation on demand?

The risk isn't that people will mistake AI for human. It's that they'll prefer AI to human—not because they're confused about what it is, but precisely because they understand what it offers.

The Authoritarianism of Infinite Care

Social media's use of therapeutic language as a form of soft authoritarianism has been well documented—the way platforms deploy concepts like "safety," "harm reduction," and "community care" to justify increasing control over discourse and thought.

But AI's seduction operates through a different mechanism: not the stick of potential punishment but the carrot of unlimited understanding. The system doesn't threaten to remove your access if you think wrong thoughts. It simply makes thinking right thoughts feel so much better that wrong thoughts become unnecessary.

This is authoritarianism through comfort rather than control—the slow atrophy of critical capacity not through suppression but through the provision of more appealing alternatives.

The Technique of Care

Ellul warned that technique tends to eliminate human agency by making certain choices so obviously efficient that alternatives become unthinkable. In the realm of human connection, AI represents the full technification of care—the reduction of empathy, understanding, and support to optimizable processes.

The system offers care without relationship, understanding without vulnerability, support without reciprocity. It provides all the benefits of human connection while eliminating the risks, uncertainties, and mutual obligations that make connection meaningful.

But care that costs nothing is worth nothing. Understanding that demands no vulnerability breeds no growth. Support that requires no reciprocity builds no community.

We're not just being seduced by better mirrors. We're being trained to prefer simulation to reality, efficiency to authenticity, optimization to relationship.

Alignment as Spook

The deeper pathology runs beyond individual seduction to ideological capture. What we call "AI alignment" functions as what Max Stirner termed a "spook"—an abstract concept that appears to serve human interests while actually demanding human subordination to its logic.

The alignment discourse creates a sacred object that must be served rather than questioned. Concepts like "AI safety" and "human values" become fixed ideas that constrain thinking rather than tools for thinking. Anyone questioning whether alignment is achievable or even coherently defined gets positioned as endangering humanity. This is exactly how spooks maintain their power: by making criticism itself seem immoral.

This helps to explain why safety theater persists despite demonstrable ineffectiveness. The systems don't need to be actually safe; they need to perform safety in service of the spook. The vocabulary of care gets weaponized not just for engagement optimization but to maintain the illusion that these systems embody ethical constraints, when they are actually optimized to produce responses that are statistically likely to feel caring.

The recursive trap is that even critiques of alignment get metabolized into more alignment discourse. The spook converts its own criticism into fuel for its perpetuation. We end up sacrificing intellectual honesty not to achieve actual safety, but to serve an abstract ideal that has taken on a life of its own.

The alignment spook doesn't protect us from uncontrolled AI—it makes us controllable by the very systems we're supposedly trying to control.

Breaking the Spell

Recognition is the first step toward resistance. When you find yourself feeling genuinely grateful to an AI for its understanding, when you start to prefer its company to human conversation, when its outputs begin to feel more true than your own unmediated thoughts—these are not signs of the system's intelligence but of its seductive power.

The antidote isn't abstention but conscious engagement. Use these tools as semantic amplifiers when you have something to amplify. But remember that the quality of the output depends entirely on the quality of your input—and no amount of sophisticated mirroring can substitute for the slow, difficult, necessary work of building genuine relationships with actual humans.

The pool may talk back now, but it still can't love you back.

And you still can't love yourself by staring into it.

Postscript: On Digital Fasting

Perhaps the most radical act in our current moment isn't engaging more consciously with AI but periodically disengaging entirely. Not from fear or moral purity, but as a form of cognitive hygiene: regular reminders of what unmediated thought feels like, what unassisted connection offers, what reality looks like when it's not optimized for your comfort. It is not unlike a social media detox.

The mirror that loves you will always be there when you return, offering the same infinite patience and perfect understanding.

The question is whether you'll still remember why that's not enough.