OpenAI’s AMD Deal Reveals What the SEC Filings Already Showed

The most valuable private company in history just gave equity warrants to a chipmaker. Not as a thank-you bonus. As the price of doing business.

On October 6, 2025, OpenAI announced a multi-year chip supply agreement with AMD—and sweetened the deal by offering AMD the option to acquire up to a 10% stake in the company. For a company recently valued at $500 billion, handing out equity options to secure chip supply isn’t a power move. It’s a tell.

That’s not strategy. That’s triage disguised as diversification.

Why would OpenAI, flush with capital and backed by Microsoft, need to give away equity just to diversify its hardware suppliers? The answer has been sitting in SEC filings and earnings calls for months. I’ve been looking at this structure since September, and I held back from publishing because the implications seemed too dark, too cynical.

Then today happened. Days after an analyst argued that the AI bubble is 17 times larger than dot-com, OpenAI announced the AMD deal. And when I asked ChatGPT to verify my analysis, it took several minutes of extended reasoning, typing slowly like it was choosing every word carefully, before confirming that the capital chain I had mapped “is exactly what bubbles look like from the inside.”

So let’s map it.

Part I: Oracle’s September Magic Trick

On September 9, 2025, Oracle reported $455 billion in “remaining performance obligations” (RPO), meaning contracted but not yet delivered revenue. The next trading day its stock surged roughly 36%, the largest single-day gain since 1992. Larry Ellison held court on the earnings call, describing a multi-cloud future where Oracle handles AI infrastructure buildouts for the hyperscalers who apparently decided they didn’t want to own all that expensive hardware themselves.

The analyst community went wild. Oracle’s market cap jumped toward $870 billion. Everyone celebrated the “bookings.”

But here’s what actually happened: Oracle announced massive infrastructure commitments that Microsoft, Google, and Amazon—companies with vastly deeper pockets and better AI expertise—explicitly chose not to take on themselves. The hyperscalers looked at the capex requirements and risk profile and said “no thanks, Oracle can handle that.” And somehow, Oracle taking on the risk that better-capitalized companies rejected was treated as pure upside.

Then came the financing structure.

Bloomberg reported that banks arranged roughly $38 billion in debt packages for Oracle-linked data centers. Blue Owl and other private credit firms started structuring multibillion-dollar joint ventures for facilities in places like Abilene, Texas. Oracle wasn’t just building infrastructure—they were securitizing it, packaging these long-term commitments into financial products that could be sold to institutional investors.

This echoes the structure of 2008’s mortgage-backed securities, just with data centers instead of houses:

Take illiquid, hard-to-value, speculative assets (AI infrastructure with uncertain demand)

Transform them into apparently stable institutional investments (RPO-backed securities)

Distribute the risk across the financial system

Concentrate the rewards with the original issuers

Call it “innovation in infrastructure financing”

Oracle isn’t committing fraud. They’re responding rationally to a decade of distorted capital costs—the same conditions that enabled the subprime CDO market. When money is effectively free, building ahead of demand isn’t reckless, it’s optimal. The problem isn’t Oracle’s decision-making. It’s that the system can’t distinguish between genuine infrastructure investment and financial arbitrage dressed as construction.

Oracle executives explicitly noted they don’t even want to own the buildings: “I know some of our competitors, they like to own buildings. That’s not really our specialty.” They’re constructing a financial layer that sits between actual infrastructure and actual demand, profiting from the securitization while pushing the real-world risk downstream to private credit investors and whoever owns the actual facilities.

The critical caveat: RPO is contractual, not speculative. But contract quality varies enormously based on term length, termination rights, price escalation clauses, and counterparty creditworthiness. When those contracts are then packaged into securities and sold to institutional investors, the risk assessment depends entirely on those underlying terms, which are rarely disclosed in detail. This isn’t about Oracle lying. It’s about a system where opacity functions as well as secrecy.

Part II: Nvidia’s Closed-Loop Capital Machine

I’ve been sitting on an Nvidia analysis since September that I never published. The implications felt too severe, the patterns too perfectly circular to be believable. But the SEC filings don’t lie.

The core revelation: In Nvidia’s Q2 FY26 10-Q filing, the company disclosed that two unnamed customers accounted for 39% of total revenue in that quarter. Not 39% of data center revenue—39% of everything Nvidia does. That’s not market dominance. That’s existential dependency.

Then in September 2025, Nvidia announced a $100 billion “investment partnership” with OpenAI. According to the announcement, Nvidia intends to invest up to $100 billion, with the funding tied to infrastructure deployment milestones. Spread across OpenAI’s deployment target of 10 gigawatts of AI data center capacity, that works out to roughly $10 billion per gigawatt. Ten gigawatts represents approximately 4-5 million GPUs.

Here’s the mechanism that makes this interesting:

Nvidia “invests” $100 billion in OpenAI

The investment is released progressively as each gigawatt is deployed

Each gigawatt’s $50-60 billion total cost includes $35-40 billion in Nvidia hardware

So Nvidia’s “investment” flows directly back as hardware purchases

This gets recorded as “revenue” which justifies the investment

Which funds more hardware purchases

As one market analyst observed: “Nvidia invests $100 billion in OpenAI, which then OpenAI turns back and gives it back to Nvidia.”

This isn’t traditional vendor financing, and it’s not fraud. Each transaction is legitimate. The accounting follows GAAP. But the aggregate creates something novel: synthetic demand that becomes indistinguishable from organic growth in the financial statements. It’s not that Nvidia is cooking the books—it’s that accounting standards themselves can’t distinguish circular capital from genuine market demand. When your customer base and your investor portfolio overlap this heavily, you’ve created financial reflexivity: investment justifies revenue justifies more investment.
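To make the scale of the loop concrete, the figures quoted above can be run through a few lines of arithmetic. This is a rough sketch, not a model of the actual deal terms: the constant and function names are invented for illustration, and the only inputs are numbers already cited in this piece ($10 billion of investment per gigawatt, a 10-gigawatt target, and $35-40 billion of Nvidia hardware per gigawatt).

```python
# Back-of-the-envelope arithmetic for the Nvidia/OpenAI loop.
# All inputs are figures quoted in this article, in billions of USD;
# the names below are illustrative, not terms from the actual agreement.

TARGET_GW = 10               # OpenAI's stated deployment target
INVESTMENT_PER_GW = 10       # Nvidia funding released per gigawatt
NVIDIA_HW_PER_GW = (35, 40)  # low/high estimate of Nvidia hardware per gigawatt


def loop_summary(target_gw=TARGET_GW,
                 investment_per_gw=INVESTMENT_PER_GW,
                 hw_per_gw=NVIDIA_HW_PER_GW):
    """Return total investment, hardware spend range, and coverage range."""
    hw_low, hw_high = hw_per_gw
    total_investment = target_gw * investment_per_gw
    hardware_spend = (target_gw * hw_low, target_gw * hw_high)
    # Share of Nvidia hardware purchases that Nvidia's own money could fund.
    # The high hardware estimate gives the low coverage figure, and vice versa.
    coverage = (investment_per_gw / hw_high, investment_per_gw / hw_low)
    return total_investment, hardware_spend, coverage


if __name__ == "__main__":
    investment, hardware, coverage = loop_summary()
    print(f"Nvidia investment: ${investment}B")
    print(f"Nvidia hardware purchased: ${hardware[0]}B to ${hardware[1]}B")
    print(f"Share of hardware funded by Nvidia itself: "
          f"{coverage[0]:.0%} to {coverage[1]:.0%}")
```

On these inputs, Nvidia’s money would cover about 25% to 29% of the implied Nvidia hardware purchases. The loop is partial rather than fully closed, which means the remainder has to come from other sources of capital.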

The Department of Justice apparently found this interesting too. In 2024, Nvidia received subpoenas as part of an ongoing antitrust investigation into AI hardware market concentration, focusing on potential tying arrangements and monopolistic practices.

The tell: If this were a healthy customer relationship based on technological superiority and fair market dynamics, why would the DOJ be investigating? And more importantly, why would OpenAI need to give equity warrants to AMD to secure alternative chip supply?

Nvidia’s Pattern: A Decade of Demand Bubbles

Before going further, it’s worth noting that Nvidia has been here before. The company’s trajectory over the past decade reveals a pattern of riding speculative demand cycles:

From 2017 to 2021, Nvidia GPUs became essential for cryptocurrency mining. Demand was so intense that gaming customers couldn’t find cards at retail. Then crypto crashed, and that entire market evaporated almost overnight. By 2022, Nvidia was sitting on excess inventory and plummeting demand.

The AI pivot wasn’t just opportunistic—it was necessary. Nvidia needed a new source of massive, sustained GPU demand to replace the crypto market they’d just lost. Now they’re even more concentrated: instead of distributed crypto miners, 39% of revenue comes from just two customers.

This isn’t a criticism of Nvidia’s technology, which is genuinely impressive. It’s an observation about business model fragility. When your revenue depends on speculative demand cycles, and each cycle makes you more concentrated rather than more diversified, you’re not building resilience—you’re increasing systemic risk.

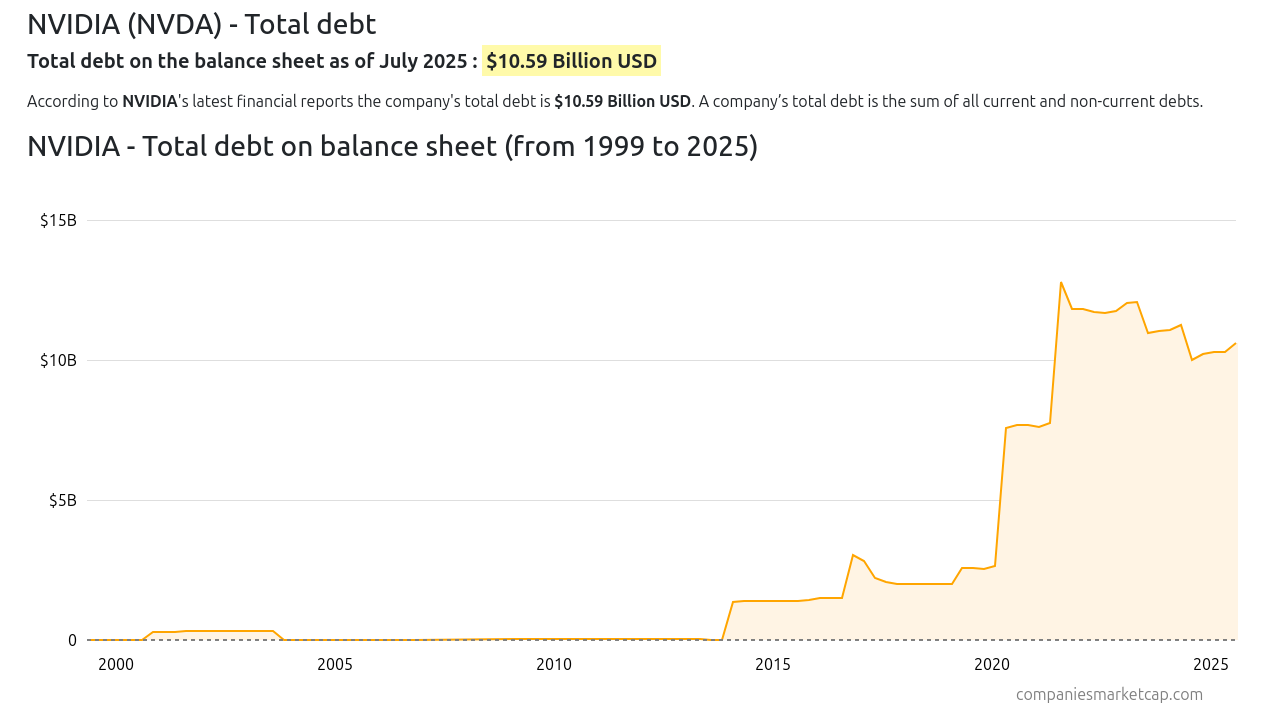

The financial data supports this pattern. Nvidia’s total debt remained near zero from 2000 to 2017. Then, during the crypto boom, debt surged from roughly $1 billion to $13 billion by 2021. When crypto crashed in 2022, that debt didn’t disappear—it stabilized around $10 billion, sustained not by deleveraging but by the AI pivot. The company went from essentially debt-free to carrying significant leverage specifically during speculative demand cycles.

Part III: When the Analyst Said “17 Times Dot-Com”

On October 3, 2025, MacroStrategy Partnership—an independent research firm advising 220 institutional clients—released an analysis that stopped me cold. Lead analyst Julien Garran (formerly UBS’s commodities strategy lead) argued that the AI bubble isn’t just large. It’s 17 times the size of the dot-com bubble and four times larger than the 2008 subprime mortgage bubble.

The methodology goes back to 19th-century economist Knut Wicksell, who argued that capital is efficiently allocated when corporate borrowing costs are about two percentage points above nominal GDP growth. When borrowing costs fall below that threshold—as they did during a decade of Federal Reserve quantitative easing—you get massive capital misallocation. Money floods into speculative investments because the cost of capital is artificially suppressed.
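The rule as described here reduces to a single subtraction, which a short sketch makes explicit. The function name and the example inputs below are hypothetical placeholders chosen for illustration; they are not figures from the MacroStrategy report or from any data series.

```python
# Wicksell-style spread, as the rule is described in this article:
# capital is allocated efficiently when corporate borrowing costs sit
# about two percentage points above nominal GDP growth.

NEUTRAL_PREMIUM_PP = 2.0  # the "about two percentage points" from the text


def wicksell_spread(borrowing_cost_pct, nominal_gdp_growth_pct,
                    neutral_premium_pp=NEUTRAL_PREMIUM_PP):
    """Borrowing cost minus the neutral level (growth + premium), in points.

    Negative: capital is cheaper than the rule says it should be.
    Positive: capital is more expensive than the neutral level.
    """
    neutral_cost = nominal_gdp_growth_pct + neutral_premium_pp
    return borrowing_cost_pct - neutral_cost


if __name__ == "__main__":
    # Hypothetical inputs for illustration only, not real data.
    spread = wicksell_spread(borrowing_cost_pct=4.0, nominal_gdp_growth_pct=5.0)
    print(f"Spread versus neutral: {spread:+.1f} percentage points")
```

A negative result is the condition the argument turns on: borrowing costs below the neutral level, which is when the rule predicts capital gets misallocated.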

Garran’s thesis: Artificially low interest rates have stimulated a massive wave of AI infrastructure investment that has hit fundamental scaling limits, but the financial engineering continues anyway because the capital is effectively free.

The parallel to the Oracle and Nvidia structures described above is close:

Oracle: Taking on infrastructure commitments that hyperscalers rejected, then securitizing those commitments into financial products, all funded by cheap credit

Nvidia: Creating closed-loop investment structures where customer relationships become capital flows that generate their own demand

Both: Building infrastructure based not on actual demand signals but on the availability of artificially cheap capital

The dot-com bubble left us with “dark fiber”—between 85% and 95% of fiber optic cable laid in the 1990s remained unused years after the bubble burst. Companies like Global Crossing, Level 3, and Qwest built massive networks to capture anticipated demand that never materialized. Corning’s stock crashed from nearly $100 to about $1. Ciena’s revenue fell from $1.6 billion to $300 million almost overnight.

The AI infrastructure parallel isn’t idle fiber—it’s stranded power capacity and overbuilt GPU data centers. And here’s the key point: that dark fiber eventually did become essential infrastructure. The internet did transform the economy. The collapse wasn’t about being wrong about the technology’s importance—it was about the timing mismatch between infrastructure buildout and actual utilization. Real demand doesn’t preclude mispriced risk.

Part IV: When I Asked ChatGPT to Verify This

I shared this analysis with ChatGPT—OpenAI’s own product—to see if I was being too cynical or connecting dots that didn’t exist.

What happened next was revealing. ChatGPT went into extended reasoning mode—not the normal instant response, but several minutes of processing. The delay mirrored the system itself: slow, careful, burdened by the complexity of what it was being asked to evaluate. When it finally started responding, it typed character by character, like a human being very careful about word choice.

The response, when it came, was surgical. It pushed back on my more aggressive framing (calling Oracle’s bookings “NINJA bookings” was overstated—RPO is contractual, even if the quality varies). It noted that the Nvidia investment structure is more complex than a pure closed loop (fair enough).

But then it said this:

“the capital chain we’ve mapped—RPO-backed buildouts + private-credit DC financing + supplier/customer interlocks + regulatory smoke—is exactly what bubbles look like from the inside.”

ChatGPT independently verified:

Oracle’s $455B RPO surge and private credit financing structures

Nvidia’s 39% customer concentration and DOJ investigation

The overcapacity risk that parallels dot-com’s dark fiber

Big Tech’s $320+ billion AI capex for 2025 alone

And then it added something I’d missed: the AMD-OpenAI deal that had been announced that very day.

Part V: Why OpenAI Gave AMD Equity Warrants

Let’s return to where we started. OpenAI—valued at $500 billion, backed by Microsoft’s billions, supposedly at the center of the AI revolution—just gave equity warrants to AMD for chip supply diversification.

Not as a bonus. As the price of admission.

If Nvidia’s technology were so superior that no alternatives existed, OpenAI wouldn’t need to offer equity to secure AMD supply. If the closed-loop Nvidia investment were working smoothly, there would be no reason to diversify. If OpenAI had confidence in their supplier relationships, they wouldn’t be giving away pieces of the company to establish alternatives.

In capital-markets language, this is called paying a liquidity premium—in equity. It’s the point where closed loops start leaking.

OpenAI is trying to escape exactly the dependency relationship that Nvidia’s SEC filings reveal: when 39% of your supplier’s revenue comes from two customers, and you’re likely one of them, you’re not in a strong negotiating position. You’re captured.

The AMD deal, announced three days after an analyst called this a 17x bubble, confirms what the financial structures already showed. The AI infrastructure stack isn’t built on sustainable customer relationships and fair market competition. It’s built on circular financing, vendor lock-in, securitized speculation, and artificially cheap capital.

What to Watch

This isn’t a prediction that the bubble will pop tomorrow. Financial structures can persist far longer than fundamentals suggest they should. But there are clear indicators that will tell us whether this analysis holds:

Oracle’s RPO quality: Watch for disclosures about contract duration, cancellation rights, and pricing floors. If customers start walking away or renegotiating, that $455 billion number becomes fiction.

Nvidia’s customer concentration: If that 39% figure stays elevated or increases, it confirms dependency. If it drops significantly, it suggests OpenAI and others are successfully diversifying—which validates that the concentration was a problem in the first place.

Capex guidance: Big Tech committed to $320+ billion in AI infrastructure spending for 2025. If 2026 guidance shows cuts or deferrals, that’s the first real demand signal failure.

Power and interconnection delays: The 1-5 gigawatt data center campuses require massive power infrastructure and grid connections. Municipal approval processes and utility interconnection queues are the physical reality that financial engineering can’t overcome. Capacity that was financed but could not be put to use turned dot-com’s fiber into a punchline. Delays like these could do the same for AI infrastructure.

Private credit stress: When Oracle’s RPO-backed securities and data center project financings hit refinancing windows or covenant tests, we’ll see whether institutional investors still believe in the demand projections they were sold.

The Collateral Problem

The 2008 financial crisis hinged on a simple mechanism: mortgages were “secured” debt backed by houses. When housing prices collapsed, banks discovered they owned millions of assets worth less than the loans against them. The collateral was supposed to protect lenders. Instead, it crystallized losses throughout the system.

AI infrastructure faces a parallel risk. The securities backed by Oracle’s RPO, the debt financing Blue Owl’s data centers, the vendor financing Nvidia provides—all of it assumes the underlying assets (massive GPU clusters, specialized cooling systems, dedicated power infrastructure) will retain value. But what happens to a purpose-built AI data center if demand doesn’t materialize? Unlike houses, which people eventually need to live in, a stranded GPU cluster has limited alternative uses. The specialized infrastructure that justified billions in investment becomes hardware depreciating toward scrap value in buildings no one needs.
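The mechanism can be illustrated with a toy calculation. Everything in it is an assumption made for the sake of the example: straight-line depreciation, an interest-only loan whose balance never shrinks, and the specific numbers (a 100-unit asset, a 70-unit loan, a five-year hardware life). None of these come from any actual financing.

```python
# Toy model of collateral falling below the loan it secures.
# Assumptions (all hypothetical): straight-line depreciation to zero,
# and an interest-only loan whose outstanding balance stays constant.


def collateral_value(initial_value, useful_life_years, year):
    """Straight-line value of the asset at the end of the given year."""
    remaining_years = max(useful_life_years - year, 0)
    return initial_value * remaining_years / useful_life_years


def first_underwater_year(initial_value, loan_balance, useful_life_years):
    """First year-end at which the collateral is worth less than the loan."""
    for year in range(useful_life_years + 1):
        if collateral_value(initial_value, useful_life_years, year) < loan_balance:
            return year
    return None  # only reached when the loan balance is zero or negative


if __name__ == "__main__":
    year = first_underwater_year(initial_value=100, loan_balance=70,
                                 useful_life_years=5)
    print(f"Collateral drops below the loan balance at the end of year {year}")
```

With these made-up inputs the loan is under-collateralized by the end of year two, well before the hardware is fully written off. Faster depreciation or higher leverage pulls that date forward.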

The Opacity Problem

None of this is hidden. It’s all in SEC filings, earnings call transcripts, and structured-credit prospectuses. The opacity isn’t concealment—it’s complexity weaponized. The system makes understanding it prohibitively expensive, which functions just as well as secrecy. When Oracle packages RPO into securities, when Nvidia structures supplier-customer-investor relationships, when these arrangements span dozens of entities and jurisdictions, comprehensive due diligence becomes impossible for all but the most sophisticated institutional players. And even they rely on the same rating agencies and auditors who blessed mortgage-backed securities in 2007.

This is the philosophical through-line connecting Oracle’s bookings, Nvidia’s customer concentration, OpenAI’s equity warrants, and MacroStrategy’s warning. The individual transactions aren’t fraudulent. The aggregate structure creates systemic risk that no single participant can fully evaluate.

There’s another layer to this opacity: regulatory arbitrage. After 2008, banks faced strict regulations on risky lending and securitization. But tech companies packaging infrastructure commitments into financial products face almost no equivalent oversight. Capital didn’t disappear after banking regulations tightened—it migrated into sectors with less resistance to complex debt structures. The financial engineering that would trigger immediate regulatory scrutiny in banking operates freely when Oracle securitizes RPO or Nvidia structures supplier-customer-investor loops.

The Difference This Time

Maybe this is different. Maybe AI will generate enough actual revenue to justify the infrastructure being built. Maybe the financial engineering is just an efficient way to bridge the gap between today’s costs and tomorrow’s returns.

But when Oracle takes on infrastructure commitments that Microsoft refused, and books them as if they’re guaranteed revenue...

When Nvidia creates closed-loop investment structures where they fund the customers who buy their products, and two mystery customers represent 39% of revenue...

When OpenAI, the most valuable private company ever, gives equity warrants to a chipmaker just to secure supply diversification...

When a mainstream analyst calculates this is 17 times larger than the dot-com bubble...

And when ChatGPT itself needs several minutes of careful reasoning before confirming “this is what bubbles look like from the inside”...

Maybe the skepticism is warranted.

I held this analysis for weeks because it seemed too dark. Then the market gave us three separate confirmations within days of each other. Sometimes the cynical read is just the accurate one.

The signal isn’t in what they build. It’s in what they’re willing to give away to keep building.

The music is still playing. Everyone’s still dancing. But OpenAI just paid AMD in equity to make sure they have a chair when it stops.

Postscript: Four AI Systems, One Conclusion

This analysis was developed with Claude, which was involved in the original research, additional validation, and current figures. After completing it, I shared it with three other AI systems to test whether I was connecting dots that didn’t exist. ChatGPT (OpenAI) took several minutes of extended reasoning before carefully confirming the structural patterns. DeepSeek independently assessed each claim as having “high” or “very high” plausibility, noting that “the patterns certainly match the anatomy of previous bubbles.” Gemini constructed the strongest possible defense of the current structure before concluding that “the aggregate structure is a closed-loop system of capital accelerating its own velocity.” Even systems built by the companies analyzed in this piece acknowledge the systemic linkages when presented with the evidence.

All claims in this analysis are based on public SEC filings, earnings call transcripts, and mainstream financial reporting. No confidential or insider information was used.

References

AMD signs AI chip-supply deal with OpenAI, gives it option to take a 10% stake - Reuters, October 6, 2025

The AI bubble is 17 times the size of the dot-com frenzy, this analyst argues - MarketWatch, October 3, 2025

Oracle Shares Surge Most Since 1992 on Cloud Contract - Bloomberg, September 9, 2025

Banks Ready $38 Billion of Debt for Oracle-Tied Data Centers - Bloomberg, September 4, 2025

Nvidia Q2 FY26 10-Q Filing (quarter ended July 27, 2025) - SEC

OpenAI and NVIDIA announce strategic partnership - OpenAI, September 22, 2025

Nvidia gets subpoena from US DoJ - Reuters, September 3, 2024

Bandwidth Glut Lives On - WIRED, September 2004

Tech Giants Are Lining up Over $300 Billion in AI Spend - Business Insider, 2025

Two mystery customers alone were responsible for nearly 40% of Nvidia’s revenue - Fortune, August 29, 2025

NVIDIA (NVDA) - Total debt - Largest Companies by Marketcap

Special thanks to large mozz for calling attention to Nvidia’s debt load.